Being able to access dynamic content is a key advantage of Selenium. Since the DOM is generated dynamically, Selenium also makes it possible to scrape pages with content created in JavaScript. URL → HTTP request → HTML → Selenium → DOM This results in the following schema illustrating web scraping with Selenium: The resulting changes to the page are reflected in the DOM. For example, clicks can be simulated and forms can be filled out automatically. This standardized interface makes it possible to test user interactions. The browser interprets the page’s source code and generates a Document Object Model (DOM). Instead, the page is loaded in a browser without a user interface. Unlike Scrapy or BeautifulSoup, Selenium does not use the page’s HTML source code. Despite the fact that Selenium itself is not written in Python, the software’s functions can be accessed using Python. While it was originally developed to test websites and web apps, the Selenium WebDriver with Python can also be used to scrape websites. The free-to-use software Selenium is a framework for automated software testing for web applications. Alternatively, you can set up your own web scraping server using the open-source software Scrapyd. As a result, even large websites can be scraped without having to use your own computer and home internet connection. There the spiders can be run on a schedule. In addition, existing spiders can be uploaded to the Scrapy cloud. The spiders are controlled using this Scrapy shell. In addition to the core Python package, the Scrapy installation comes with a command-line tool. Object-oriented programming is used for this purpose. Each spider is programmed to scrape a specific website and crawls across the web from page to page as a spider is wont to do. These are small programs based on Scrapy. The core concept for scraper development with Scrapy are scrapers called web spiders. 
This results in the following schema illustrating web scraping with Scrapy: The Python web scraping tool Scrapy uses an HTML parser to extract information from the HTML source code of a page. This will allow you to directly familiarize yourself with the scraping process. For some hands-on experience, you can use our tutorial on web scraping with Python based on BeautifulSoup. Here we will introduce you to three popular tools: Scrapy, Selenium, and BeautifulSoup. There are multiple sophisticated tools for performing web scraping with Python. This ecosystem includes libraries, open-source projects, documentation, and language references as well as forum posts, bug reports, and blog articles.

In addition to its general suitability, Python has a thriving programming ecosystem. Furthermore, Python is an established standard for data analysis and processing. Python is also effective for word processing and web resource retrieval, both of which form the technical foundations for web scraping. This is particularly easy to do with Python.

If the structure of the page is changed, the scraper must be updated.

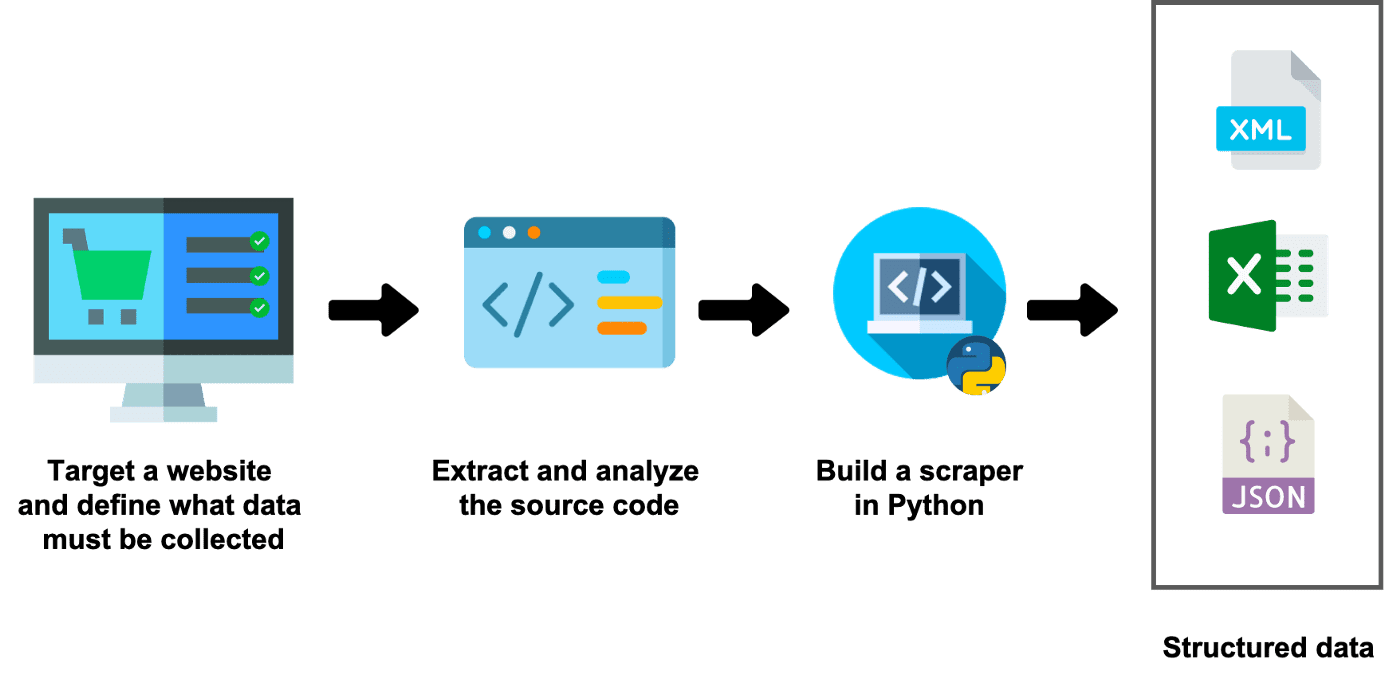

A web scraper is programmed according to the specific structure of a page. For example, the website’s design may be modified or new page components may be added. Since websites are constantly being modified, web content changes over time. The popular programming language Python is a great tool for creating web scraping software.
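Whichever tool is used, a scraper remains tied to the structure of the page it targets. The following is a minimal sketch of that dependency. It uses only Python's standard-library `html.parser` rather than any of the three tools discussed in this article, and the class name `PriceParser`, the helper `extract_prices`, and both sample HTML snippets are hypothetical, chosen purely for illustration.

```python
from html.parser import HTMLParser


class PriceParser(HTMLParser):
    """Collects the text inside every <span class="price"> element."""

    def __init__(self):
        super().__init__()
        self._in_price = False
        self.prices = []

    def handle_starttag(self, tag, attrs):
        # The scraper depends on one structural detail: span.price
        if tag == "span" and ("class", "price") in attrs:
            self._in_price = True

    def handle_endtag(self, tag):
        if tag == "span":
            self._in_price = False

    def handle_data(self, data):
        if self._in_price:
            self.prices.append(data.strip())


def extract_prices(html):
    """Return the price strings found in the given HTML source."""
    parser = PriceParser()
    parser.feed(html)
    parser.close()
    return parser.prices


# Hypothetical page as the scraper was written for it.
ORIGINAL_PAGE = (
    '<ul>'
    '<li>Tea <span class="price">4.50</span></li>'
    '<li>Coffee <span class="price">3.20</span></li>'
    '</ul>'
)

# The same page after a hypothetical redesign: same data, new markup.
REDESIGNED_PAGE = (
    '<ul>'
    '<li>Tea <div class="cost">4.50</div></li>'
    '<li>Coffee <div class="cost">3.20</div></li>'
    '</ul>'
)

print(extract_prices(ORIGINAL_PAGE))    # ['4.50', '3.20']
print(extract_prices(REDESIGNED_PAGE))  # []
```

The redesigned page still contains the prices, but the scraper no longer finds them because it looks for markup that has gone. Updating the scraper here would mean changing the tag and class checked in `handle_starttag`.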
